Defining Harness Engineering

Once AI agents enter production environments, it becomes common to see outcomes determined more by the environment around the model than by the model itself. The same model behaves well in project A and produces strange results in project B. When prompt tuning does not close that gap, the cause is usually the difference in the environment surrounding the agent.

In a recent internal OpenAI experiment, the environment around the agent was structured properly and the result was a production system with roughly one million lines of software generated by AI, without any manually written code.

In February 2026, this area finally got a name: harness engineering. In OpenAI’s usage, a harness is the full environment of scaffolding, constraints, and feedback loops that surrounds an AI agent such as Codex and lets it perform stable work. Repository structure, CI configuration, formatting rules, package managers, application frameworks, project instructions, external tool integration, and linters are all part of the harness. The harness is the infrastructure that helps the agent stay on course instead of drifting away from the intended path.

In that context, harness engineering refers to a shift in the engineer’s role: away from manually writing code and toward designing the environment, specifying intent clearly, and building the feedback loops that allow agents to autonomously build and maintain software.

The Relationship Between Prompts, Context, and Harness

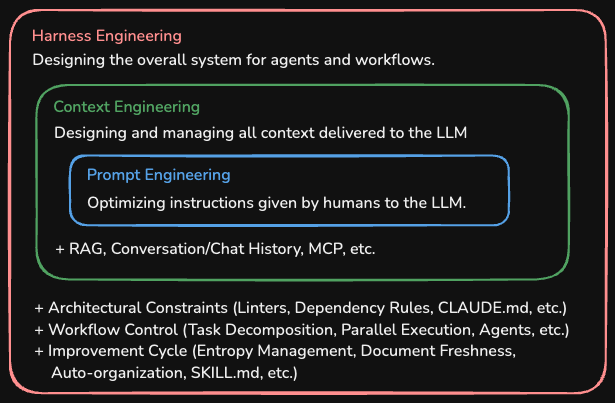

To understand harness engineering, it helps to first position it against prompt engineering and context engineering. The three can be understood as nested layers, expanding from prompt to context to harness.

These three concepts focus on different design targets when building an agent.

| Category | Core Question | Design Target |

|---|---|---|

| Prompt engineering | “What should be asked?” | The instruction text sent to the LLM |

| Context engineering | “What should be shown?” | All tokens the LLM sees at reasoning time |

| Harness engineering | “How should the whole environment be designed?” | Constraints, feedback, and operational systems outside the agent |

If prompt engineering is the command “turn right,” then context engineering is everything that helps the horse understand where it is going: the map, the road signs, and the visible terrain. Harness engineering is the larger design that includes the reins, the saddle, the fence, and the road itself so that ten horses can run safely at the same time.

People frame the relationship a little differently. The inclusion view in the table above, harness > context > prompt, is intuitive. But another useful view is that context engineering helps the model think well, while harness engineering prevents the whole system from drifting off-course. What matters in practice is not the framing itself, but the recognition that there are problems context design alone cannot solve.

From Prompt to Context, from Context to Harness

The reason these three concepts appeared in sequence is that the way people use AI changed.

During the prompt engineering phase, the interaction was usually a mostly one-shot pattern through tools like ChatGPT. Giving the model a role, breaking work into steps, and adding examples was often enough to improve the result.

That changed around the middle of 2025. When Andrej Karpathy emphasized that context engineering matters more than prompts, context engineering began to attract broader attention. The key idea was that it is not enough to phrase prompts well; the full context available to the model at reasoning time must be designed at the system level.

But once AI agents entered production settings seriously, it became clear that context design alone did not cover everything. When an agent acts autonomously across many steps, a different class of problems appears. It may ignore team conventions, generate code that violates architectural dependency directions, collide with itself under parallel execution, or gradually degrade code quality through entropy. These are not questions of “what should the model see?” They are questions of “what should the system block, measure, and repair?”

In February 2026, HashiCorp co-founder Mitchell Hashimoto used the term harness engineering in a post on his blog to describe the process of building mechanisms that prevent the same mistake from happening each time an agent fails. A few days later, OpenAI published “Harness engineering: leveraging Codex in an agent-first world”, and the term spread quickly.

Timeline of the shift

2023~2024 Peak of prompt engineering

One question, one answer structure and optimization of instructions

Mid-2025 Rise of context engineering

Karpathy's remarks helped bring broader attention, alongside LangChain and Anthropic's formal definitions

System-level context design through RAG, MCP, memory, and similar tools

2026.02 Emergence of harness engineering

Mitchell Hashimoto blog (2026.02.05)

OpenAI field report (2026.02.11)

Scope expands to full environment design for the agent

Components of a Harness

A harness is the sum of multiple mechanisms that operate outside the agent. The major parts can be organized like this.

Context Files

These are project instruction files such as CLAUDE.md, AGENTS.md, and .cursorrules.

They are documents the agent reads when starting work and usually contain project structure, coding rules, and naming conventions.

OpenAI emphasizes that they should act as the system of record.

Undocumented knowledge that only exists in Slack threads or in people’s heads is inaccessible to the agent, so architectural decisions and execution plans need to exist as repository documents such as Markdown files.

OpenAI’s experiment also found that the early strategy of keeping everything in one giant AGENTS.md predictably failed.

Because the context window is a scarce resource, a huge instruction file made the agent miss important constraints.

If everything is described as important, the agent tends to stop following the rules and fall back to rough pattern matching.

In practice, a giant manual quickly turns into a graveyard of stale rules.

The response was to treat AGENTS.md not as an encyclopedia but as a map.

docs/

├── design-docs/

│ ├── index.md

│ └── core-beliefs.md

├── exec-plans/

│ └── tech-debt-tracker.md

├── product-specs/

└── references/

└── design-system-reference-llms.txt

When the agent reads only the instruction files that are close to the current working directory, it wastes less context-window capacity while still receiving the rules that matter.

MCP Servers

MCP, the Model Context Protocol, lets the agent access external tools and data sources. That makes it possible to read tickets from an issue tracker, automate a browser, or search internal documentation.

# Example of adding MCP servers in Claude Code

claude mcp add --transport http jira https://mcp.jira.example.com/mcp

claude mcp add --transport stdio github -- npx -y @modelcontextprotocol/server-github

But connecting many MCP servers is not always better. Tool definitions themselves consume tokens, so in practice it is more effective to connect only the MCP servers needed for the current work. This is also why MCP setup and the things to watch for deserves its own discussion.

Skill Files

Agent Skills

document repeated work procedures.

If a SKILL.md file contains the instructions for a recurring task, the agent can read it when needed and carry out the workflow.

Typical examples include a code-review checklist, a deployment workflow, or the preferred pattern for a specific framework.

Mechanical Enforcement and Code Garbage Collection

This is where harness engineering separates most clearly from context engineering. In environments where agents generate large amounts of code, OpenAI used custom linters and structural tests to preserve architectural consistency and taste.

The important detail is that the linter did more than return an error. When it failed, the error message was designed to inject correction instructions directly into the agent’s context. For example, dependency direction was enforced mechanically like this.

Types -> Config -> Repo -> Service -> Runtime -> UI

If generated code violated that direction, the linter blocked it and immediately activated a feedback loop so the agent could repair the code itself. Writing a rule in documentation still allows the agent to violate it. Enforcing it at the system level prevents that up front.

Entropy and garbage collection As agents generate code at scale, they inevitably replicate poor patterns and accumulate technical debt, what some call AI slope or entropy. Instead of relying on people to clean this up by hand in large periodic sweeps, OpenAI ran background agents that continuously scanned for divergence in code and documents and opened refactoring PRs. That automated code garbage collection is also part of harness design.

Observability tools belong to the harness as well. If the agent can access runtime information such as logs through LogQL, metrics through PromQL, or DOM snapshots, it gains the ability to debug and validate the code it produced.

Experiments Where the Harness Changed the Result

The effect of the harness can still feel abstract unless it is tied to concrete results. Three experiments published in early 2026 show that effect clearly.

OpenAI Codex: one million lines, zero manual code

This is the internal OpenAI team experiment mentioned earlier. Over roughly five months starting in August 2025, the team built production software using only Codex agents. The team started with three engineers and later grew to seven. About 1,500 pull requests were merged. No code was written by hand at all, and the generated code volume reached roughly one million lines. The team reported being able to move roughly 10x faster than with manual development.

The important point is that it did not work well from the beginning. Early productivity was low because of missing environment setup, weak tool integration, and poor recovery logic. Performance rose sharply only as the harness was improved step by step. Inside the team, the role of the engineers was described as becoming primarily about making the agent useful.

Summary of the OpenAI Codex experiment

Period : 2025.08 ~ 2026.01 (about 5 months)

Team size : 3 -> 7

Manual code : 0 lines

Generated code: about one million lines

Pull requests : about 1,500 merged

Average PR/day: 3.5 per engineer

Speed estimate: about 10x faster than manual development

Hashline: 6.7% to 68.3% by changing one tool format

In February 2026, security researcher Can Boluk published an experiment called Hashline. He changed only the edit format used by agents across 16 LLMs. Instead of requiring precise text reproduction or structured diffs, Hashline attached a 2-3 character hash to each line.

1:a3|function hello() {

2:f1| return "world";

3:0e|}

If the model can refer to a line by a hash, such as “replace line 2:f1,” editing becomes possible without reproducing exact strings. The result was dramatic. For the Grok Code Fast 1 model, the benchmark score jumped from 6.7% to 68.3%. Average output tokens across all models also dropped by about 20%. The model weights did not change. Only the harness did.

LangChain: from around 30th place to around 5th

LangChain tested its coding agent on the

Terminal Bench 2.0 benchmark.

Keeping the model fixed at gpt-5.2-codex,

the team improved only the harness, and the score rose from 52.8% to 66.5%, a gain of 13.7 points.

In leaderboard terms, that moved the system from around 30th place to around 5th.

The main improvement was a tool that automatically analyzed failure patterns. The team gathered failure causes from LangSmith traces and added a self-verification loop for the agent. The system prompt, tools, and middleware were all tuning targets.

All three experiments point to the same conclusion. Before changing the model, inspect the harness. It often provides the highest ROI.

Where to Start

When applying harness engineering for the first time, there is no need to build every mechanism at once. The following three starting points usually produce the fastest practical return.

1) Write a context file

Create CLAUDE.md or AGENTS.md at the project root and include the project structure, build commands, and coding rules.

Start small, then add rules when the agent repeatedly fails in the same place.

This is the same pattern Mitchell Hashimoto described:

every time the agent makes a mistake, add the instruction that prevents that mistake from repeating.

# Example CLAUDE.md (project root)

## Build

- Run the full build with `./gradlew build`

- Run tests with `./gradlew test`

## Coding rules

- Package dependency direction: domain -> application -> infrastructure

- infrastructure does not reference domain directly

- Entities use lazy loading by default, and N+1 issues are solved with fetch join

## Commits

- Write commit messages in Korean, without a trailing period

2) Connect MCP selectively

If the agent often refers to an external system, connect that system through MCP. Typical examples are issue trackers, wikis, and monitoring systems. But only the necessary ones should be connected, otherwise tokens are wasted.

3) Connect linters and CI

Add an automatic mechanism that verifies whether code generated by the agent follows the existing architectural rules. If a linter and CI already exist, simply making their outputs readable by the agent often helps. That creates a feedback loop where the agent sees the CI failure and repairs the problem itself.

Because every team has a different tool stack and workflow, the exact form of the harness will differ. What matters is the method: observe the places where the agent fails repeatedly, and add harness at those points.

The Bottleneck Is the Environment, Not the Model

One observation sits behind the rise of harness engineering: the bottleneck in agent performance is often not model intelligence, but environment design.

I have also spent plenty of time comparing GPT, Gemini, and Claude to see which model gives better results. Of course, model differences are real. But in practice, the larger difference often comes less from the model itself and more from the execution environment built around it.

Model quality is converging upward quickly. One GPT release moves ahead, Claude catches up, and Gemini follows not long after. Harness, by contrast, is an asset each team has to build itself. Tool integration, recovery logic, architectural constraints, and document structure do not arrive in one universal package the way a general model does. That is why investment in the harness now is likely to produce a real productivity gap later.

Of course, progress is so fast that it is hard to confidently predict even one or two months ahead, let alone a year. So it is difficult to claim this gap will remain permanent. But at least for now, the same logic applies even to individual developers. Installing and using AI tools is no longer the hard part. What creates the difference is how well the environment around those tools is designed.

The hard part is that what looks like the right answer today can easily become a different answer tomorrow.

You Might Also Like

The harness components mentioned in this post are covered in more detail in these articles.

- Context Engineering: Designing the Full Picture Beyond Prompts

- How to Set Up MCP Servers in Claude Code and What to Watch For

- What Are Agent Skills, and How Do You Build Them?